Scale Unstructured Text Analytics with Efficient Batch LLM Inference

Unstructured text is everywhere in business: customer reviews, support tickets, call transcripts, documents. Large language models (LLMs) are transforming how we extract value from this data, handling tasks from categorization to summarization and more. LLMs have already proved that real-time conversation in natural language is possible. Applying them to extract insights from millions of unstructured records can be just as much of a game changer. This is where batch LLM inference becomes essential.

In this post, you will gain insight into common business use cases for large-scale text data analytics. You’ll also discover why deploying batch LLM pipelines can be challenging and how Snowflake has optimized Snowflake Cortex AI for batch inference via SQL functions.

What are common batch LLM inference jobs?

Different teams in an organization can use batch LLM inference to extract insights from large volumes of text data. Customer intelligence teams analyze reviews and forum comments to identify sentiment trends, while support teams process tickets to uncover product issues and identify gaps in the product roadmap. Meanwhile, operations teams use entity extraction on documents to automate workflows and enable metadata-driven analytical filtering. Here are a few more examples of how teams can use LLMs to extract insights from large volumes of unstructured text data:

Text classification and tagging: Automatically categorizing support tickets, emails, news articles or product reviews based on sentiment, topic or urgency.

Entity extraction: Extracting key entities (names, dates, locations, financial figures) from contracts, invoices or medical records to transform unstructured text into structured data.

Sentiment and trend analysis: Analyzing customer feedback, survey responses or social media discussions at scale to detect trends, measure sentiment and inform business decisions.

Content moderation: Scanning large data sets (social media posts, chat logs, customer feedback) for policy violations, harmful content or regulatory compliance issues.

Document summarization: Generating concise summaries for large volumes of reports, research papers, legal documents or meeting transcripts.

Document RAG preparation: Ingesting, cleaning and chunking documents before embedding them into vector representations, enabling efficient retrieval and enhanced LLM responses in retrieval-augmented generation (RAG) systems.

Text data quality: Validating multiple related text fields, such as form entries, by giving the LLM context on valid input combinations so it can detect anomalies and incorrect records and improve data quality.

Feature engineering: Extracting, categorizing and transforming unstructured text into structured features, enhancing machine learning models with enriched context and insights.
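Of the tasks above, RAG preparation includes the most mechanical step: chunking documents before they are embedded. Below is a minimal sketch in Python. It assumes fixed-size character windows with overlap, which is one common, simple strategy. Production pipelines often split on tokens, sentences or document structure instead. The function name `chunk_text` and its default sizes are hypothetical.

```python
def chunk_text(text: str, chunk_size: int = 500, overlap: int = 50) -> list[str]:
    """Split text into fixed-size character windows.

    Consecutive windows share `overlap` characters, so content that straddles
    a boundary appears in both neighboring chunks. (Hypothetical helper; the
    defaults are illustrative, not tuned values.)
    """
    if chunk_size <= 0:
        raise ValueError("chunk_size must be positive")
    if not 0 <= overlap < chunk_size:
        raise ValueError("overlap must be in [0, chunk_size)")

    step = chunk_size - overlap  # how far each new window advances
    chunks = []
    for start in range(0, len(text), step):
        chunks.append(text[start:start + chunk_size])
        # Stop once this window already reaches the end of the text.
        if start + chunk_size >= len(text):
            break
    return chunks
```

For example, `chunk_text("abcdefghij", chunk_size=4, overlap=1)` returns `["abcd", "defg", "ghij"]`. Each chunk would then be passed to an embedding model. That step is outside the scope of this sketch.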

Why efficient batch LLM pipelines matter

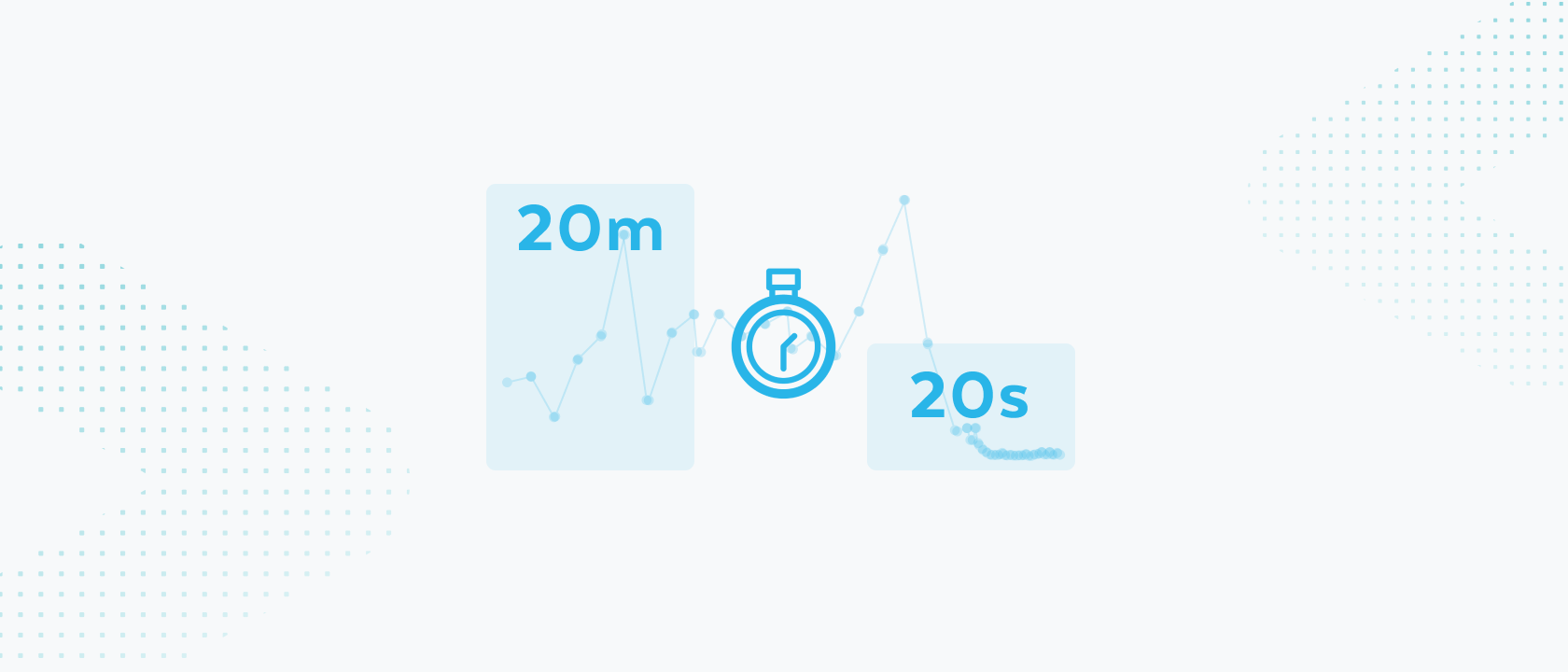

"LLMs are changing the workplace" is more than just a tagline. Consider this: at 20 seconds per ticket, categorizing 10,000 support tickets would take even your fastest employee more than 55 hours. With an optimized LLM pipeline, the same task takes minutes. This isn't an incremental improvement. It's a transformational efficiency gain that, across recurring workloads, can save thousands of labor hours and dramatically accelerate response times.
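The manual-effort figure comes from simple arithmetic, shown here in Python. Only the ticket count and the per-ticket time come from the text. The 8-hour workday is an added assumption used to put the total in calendar terms.

```python
# Figures from the text: 10,000 tickets at 20 seconds each
tickets = 10_000
seconds_per_ticket = 20
# Assumption, not from the text: an 8-hour workday
hours_per_workday = 8

total_seconds = tickets * seconds_per_ticket
total_hours = total_seconds / 3600
workdays = total_hours / hours_per_workday

print(f"{total_seconds:,} seconds = {total_hours:.1f} hours = {workdays:.1f} workdays")
# prints "200,000 seconds = 55.6 hours = 6.9 workdays"
```

This works out to nearly seven full workdays of uninterrupted categorization for one batch of tickets.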

As data volumes grow and AI automation expands, the cost efficiency of LLM processing depends on both system architecture and model flexibility. An efficient batch processing system scales cost-effectively to handle growing volumes of unstructured data. The ability to switch LLMs easily helps businesses optimize costs by right-sizing the model for each use case and upgrading as models improve.

To create significant technology and team efficiencies, organizations should also look for opportunities to integrate LLM pipelines with their existing structured data workflows. Building on existing investments in pipeline management, processing and orchestration simplifies the architecture and reduces the operational complexity of integration and infrastructure maintenance. This unification also lets data engineers, who already manage structured pipelines, easily onboard and maintain unstructured data workflows.

Efficiently run batch inference pipelines with Cortex AI

Headset moved one of its batch categorization pipelines from a leading LLM API inference provider (Fireworks AI) to the Snowflake Cortex COMPLETE inference function. As a result, job execution time dropped from 20 minutes to 20 seconds.

With the Snowflake Cortex COMPLETE function, developers can run batch LLM inference directly in SQL, without intermediary databases or lambda functions. This approach provides reliable, high-throughput processing with flexible model choice.
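The post doesn't show the SQL itself. Below is a minimal sketch in Python that generates a statement calling `SNOWFLAKE.CORTEX.COMPLETE(model, prompt)` once per row and saves the results as a new table, so the output can feed existing structured workflows. The table and column names, the prompt, the helper names and the default model `llama3.1-8b` are all hypothetical. Check the Cortex documentation for the models available in your region.

```python
def _sql_literal(value: str) -> str:
    """Quote a Python string as a SQL string literal, doubling any single quotes."""
    return "'" + value.replace("'", "''") + "'"


def build_batch_complete_sql(
    source_table: str,
    text_column: str,
    target_table: str,
    instruction: str,
    model: str = "llama3.1-8b",  # hypothetical default; availability varies
) -> str:
    """Return a CREATE TABLE ... AS SELECT that runs COMPLETE on every row.

    Table and column identifiers are interpolated as-is, so they must come
    from trusted configuration, not user input.
    """
    return (
        f"CREATE OR REPLACE TABLE {target_table} AS\n"
        f"SELECT {text_column},\n"
        f"       SNOWFLAKE.CORTEX.COMPLETE(\n"
        f"           {_sql_literal(model)},\n"
        # The instruction is prepended to each row's text to form the prompt.
        f"           CONCAT({_sql_literal(instruction)}, {text_column})\n"
        f"       ) AS llm_output\n"
        f"FROM {source_table}"
    )


# Hypothetical usage: categorize every row of a support_tickets table.
sql = build_batch_complete_sql(
    source_table="support_tickets",
    text_column="ticket_text",
    target_table="ticket_categories",
    instruction="Classify this ticket as billing, bug or feature request. Reply with one word. Ticket: ",
)
print(sql)
```

The model is passed as an ordinary string argument. Switching to a smaller or newer model is therefore a change to one parameter, and the rest of the pipeline stays the same.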

Other customer success stories

By using Snowflake, Nissan accelerated its project timeline by two months for a customer intelligence application that analyzes customer sentiment from reviews and forums to help dealerships enhance their product and service offerings. Watch the on-demand webinar.

Skai deployed a categorization tool in just two days. The tool helps Skai's customers gain better insight into purchasing patterns by building categories that make sense across multiple ecommerce platforms. Read the case study.

Find more stories in our customer success ebook.

Get started

Check out these resources, and stay tuned for updates on serverless inference for more data types.